As of September 2023, the landscape of Large Language Models (LLMs) is still witnessing the rise of models including Alpaca, Falcon, Llama 2, GPT-4, and many others.

An important aspect of leveraging the potential of these LLMs lies in the fine-tuning process, a strategy that allows pre-trained models to be customized for specific tasks with precision. It is through fine-tuning that these models can truly align with individual requirements, offering solutions that are both innovative and tailored to specific needs.

However, it is essential to note that not all fine-tuning avenues are created equal. For instance, accessing the fine-tuning capabilities of GPT-4 comes at a premium, requiring a paid subscription that is relatively more expensive than other options available in the market. On the other hand, the open-source domain is bustling with alternatives that offer a more accessible pathway to harnessing the power of large language models. These open-source options democratize access to advanced AI technology, fostering innovation and inclusivity in the rapidly evolving AI landscape.

Why is LLM fine-tuning important?

LLM fine-tuning is more than a technical enhancement; it is a crucial aspect of LLM development that allows for a more specific and refined application in various tasks. Fine-tuning adjusts pre-trained models to better suit specific datasets, enhancing their performance on particular tasks and ensuring a more targeted application. It highlights the remarkable ability of LLMs to adapt to new data, a flexibility that is vital given the ever-growing interest in AI applications.

Fine-tuning large language models opens up many opportunities, allowing them to excel in specific tasks ranging from sentiment analysis to medical literature reviews. By tuning the base model to a specific use case, we unlock new possibilities, enhancing the model’s efficiency and accuracy. Moreover, it makes more economical use of system resources, as fine-tuning requires less computational power than training a model from scratch.

As we go deeper into this guide, we will discuss the intricacies of LLM fine-tuning, giving you a comprehensive overview based on the latest developments and best practices in the field.

Instruction-Based Fine-Tuning

The fine-tuning phase in the Generative AI lifecycle, illustrated in the figure below, is characterized by the integration of instruction inputs and outputs, coupled with examples of step-by-step reasoning. This approach helps the model produce responses that are not only relevant but also precisely aligned with the specific instructions fed into it. It is during this phase that pre-trained models are adapted to solve distinct tasks and use cases, using personalized datasets to enhance their functionality.

Single-Task Fine-Tuning

Single-task fine-tuning focuses on honing the model’s expertise in a specific task, such as summarization. This approach is particularly useful in optimizing workflows involving substantial documents or conversation threads, including legal documents and customer support tickets. Remarkably, this fine-tuning can achieve significant performance improvements with a relatively small set of examples, ranging from 500 to 1,000, in contrast to the billions of tokens used in the pre-training phase.

Foundations of LLM Fine-Tuning: Transformer Architecture and Beyond

The journey of understanding LLM fine-tuning begins with a grasp of the foundational elements that constitute large language models. At the heart of these models lies the transformer architecture, a neural network that leverages self-attention mechanisms to prioritize the context of words over their proximity in a sentence. This innovative approach facilitates a deeper understanding of distant relationships between tokens in the input.

As we navigate through the intricacies of transformers, we encounter a multi-step process that begins with the encoder. This initial phase involves tokenizing the input and creating embedding vectors that represent the input and its position in the sentence. The subsequent stages involve a series of calculations using matrices known as Query, Key, and Value, culminating in a self-attention score that dictates the focus on different parts of the sentence and various tokens.
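The Query, Key, and Value calculation can be sketched in a few lines of NumPy. This is a minimal single-head illustration under assumptions that are not in the text: the toy dimensions, the random projection matrices `w_q`, `w_k`, `w_v`, and the helper name `self_attention` are all hypothetical, and real transformers add multiple heads, masking, and learned weights.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def self_attention(embeddings, w_q, w_k, w_v):
    # Project token embeddings into query, key, and value spaces.
    q = embeddings @ w_q
    k = embeddings @ w_k
    v = embeddings @ w_v
    # Scaled dot-product scores: one row of attention weights per token.
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights @ v, weights

rng = np.random.default_rng(0)
seq_len, d_model, d_head = 4, 8, 8  # hypothetical toy sizes
embeddings = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))

output, weights = self_attention(embeddings, w_q, w_k, w_v)
print(output.shape)         # (4, 8)
print(weights.sum(axis=1))  # every row of attention weights sums to 1
```

Each row of `weights` shows how strongly one token attends to every other token, regardless of how far apart they sit in the sequence.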

Fine-tuning stands as a critical phase in the development of LLMs, a process that entails making subtle adjustments to achieve more desirable outputs. This stage, while essential, presents a set of challenges, including the computational and storage demands of handling a vast number of parameters. Parameter-Efficient Fine-Tuning (PEFT) offers techniques to reduce the number of parameters to be fine-tuned, thereby simplifying the training process.

LLM Pre-Training: Establishing a Strong Base

In the initial stages of LLM development, pre-training takes center stage, using over-parameterized transformers as the foundational architecture. This process involves modeling natural language in various ways, such as bidirectional, autoregressive, or sequence-to-sequence, on large-scale unsupervised corpora. The objective here is to create a base that can be fine-tuned later for specific downstream tasks through the introduction of task-specific objectives.

A noteworthy trend in this sphere is the inevitable increase in the scale of pre-trained LLMs, measured by the number of parameters. Empirical data consistently shows that larger models coupled with more data almost always yield better performance. For instance, GPT-3, with its 175 billion parameters, has set a benchmark in generating high-quality natural language and performing a wide array of zero-shot tasks proficiently.

Fine-Tuning: The Path to Model Adaptation

Following pre-training, the LLM undergoes fine-tuning to adapt to specific tasks. Despite the promising performance shown by in-context learning in pre-trained LLMs such as GPT-3, fine-tuning remains superior in task-specific settings. However, the prevalent approach of full-parameter fine-tuning presents challenges, including high computational and memory demands, especially when dealing with large-scale models.

For large language models with over a billion parameters, efficient management of GPU RAM is pivotal. A single model parameter at full 32-bit precision requires 4 bytes of space, translating to a requirement of 4GB of GPU RAM just to load a 1 billion parameter model. The actual training process demands even more memory to accommodate various components including optimizer states and gradients, potentially requiring up to 80GB of GPU RAM for a model of this scale.
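The arithmetic behind the 4GB figure is easy to reproduce. The sketch below also gives a rough lower bound for training memory under one stated assumption: an Adam-style optimizer that keeps two extra values per parameter. That optimizer choice, and the function names, are illustrative assumptions rather than something the text specifies, and activations and temporary buffers are deliberately left out.

```python
BYTES_PER_GB = 10**9  # decimal gigabytes, matching the 4 bytes -> 4GB arithmetic

def weights_memory_gb(num_params, bytes_per_param=4):
    """Memory needed just to hold the weights (4 bytes each at 32-bit)."""
    return num_params * bytes_per_param / BYTES_PER_GB

def training_memory_gb(num_params, bytes_per_param=4, optimizer_states_per_param=2):
    """Rough lower bound: weights + gradients + optimizer states.

    Assumes an Adam-style optimizer (two extra values per parameter).
    Activations and temporary buffers are NOT included.
    """
    copies = 1 + 1 + optimizer_states_per_param  # weights, gradients, optimizer
    return num_params * bytes_per_param * copies / BYTES_PER_GB

one_billion = 1_000_000_000
print(weights_memory_gb(one_billion))   # 4.0
print(training_memory_gb(one_billion))  # 16.0
```

Activation memory grows with batch size and sequence length, which is why real training runs need considerably more than this lower bound.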

To work within the limits of GPU RAM, quantization is used, a technique that reduces the precision of model parameters and thereby lowers memory requirements. For instance, changing the precision from 32-bit to 16-bit can halve the memory needed for both loading and training the model. Later in this article, we will look at QLoRA, which uses quantization for tuning.
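A quick way to see the halving effect is to cast an array from 32-bit to 16-bit floats and compare sizes. This is only an illustration on random numbers, which stand in for real model weights; the array size is an arbitrary assumption.

```python
import numpy as np

rng = np.random.default_rng(0)
# One million random values standing in for model weights (hypothetical data).
weights_fp32 = rng.normal(size=1_000_000).astype(np.float32)
weights_fp16 = weights_fp32.astype(np.float16)

print(weights_fp32.nbytes)  # 4000000
print(weights_fp16.nbytes)  # 2000000

# The price of the smaller format is a small rounding error per value.
max_error = np.abs(weights_fp32 - weights_fp16.astype(np.float32)).max()
print(max_error)
```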

Exploring the Categories of PEFT Methods

In the process of fully fine-tuning Large Language Models, it is important to have a computational setup that can efficiently handle not just the substantial model weights, which for the most advanced models are now reaching sizes in the hundreds of gigabytes, but also a series of other critical components. These include memory for optimizer states, gradients, forward activations, and temporary buffers used during various stages of the training procedure.

Additive Method

This type of tuning augments the pre-trained model with additional parameters or layers and trains only the newly added parameters. Despite increasing the parameter count, these methods improve training time and space efficiency. The additive method is further divided into sub-categories:

- Adapters: Incorporating small fully connected networks after transformer sub-layers, with notable examples being AdaMix, KronA, and Compacter.

- Soft Prompts: Fine-tuning a segment of the model’s input embeddings through gradient descent, with IPT, prefix-tuning, and WARP being prominent examples.

- Other Additive Approaches: Include techniques like LeTS, AttentionFusion, and Ladder-Side Tuning.

Selective Method

Selective PEFTs fine-tune a limited number of top layers based on layer type and internal model structure. This category includes methods like BitFit and LN tuning, which focus on tuning specific elements such as model biases or particular rows.
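To make the idea of tuning only the biases concrete, here is a small counting sketch in the spirit of BitFit. The parameter names and shapes are hypothetical and do not come from any real model; the point is only how small the selected subset is.

```python
import numpy as np

# Hypothetical parameters of one tiny layer; names and shapes are illustrative.
params = {
    "layer0.attention.weight": np.zeros((64, 64)),
    "layer0.attention.bias": np.zeros(64),
    "layer0.mlp.weight": np.zeros((64, 256)),
    "layer0.mlp.bias": np.zeros(256),
}

# BitFit-style selection: only bias terms are marked as trainable.
trainable = {name: name.endswith(".bias") for name in params}

total = sum(p.size for p in params.values())
tuned = sum(p.size for name, p in params.items() if trainable[name])
print(tuned, total)  # 320 20800
```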

Reparametrization-based Method

These methods use low-rank representations to reduce the number of trainable parameters, with the most renowned being Low-Rank Adaptation, or LoRA. This method leverages a simple low-rank matrix decomposition to parameterize the weight update, demonstrating effective fine-tuning in low-rank subspaces.

1) LoRA (Low-Rank Adaptation)

LoRA emerged as a groundbreaking PEFT technique, introduced in a paper by Edward J. Hu and others in 2021. It operates within the reparameterization category, freezing the original weights of the LLM and integrating new trainable low-rank matrices into each layer of the Transformer architecture. This approach not only curtails the number of trainable parameters but also reduces the training time and computational resources required, thereby presenting a more efficient alternative to full fine-tuning.

To understand the mechanics of LoRA, one must revisit the transformer architecture, where the input prompt undergoes tokenization and conversion into embedding vectors. These vectors pass through the encoder and/or decoder segments of the transformer, encountering self-attention and feed-forward networks whose weights are pre-trained.

LoRA draws on the idea behind Singular Value Decomposition (SVD). Essentially, SVD factors a matrix into three distinct matrices, one of which is a diagonal matrix holding the singular values. These singular values are pivotal as they gauge the significance of different dimensions in the matrices, with larger values indicating higher importance and smaller ones denoting lesser importance.

This approach allows LoRA to maintain the essential characteristics of the data while reducing the dimensionality, hence optimizing the fine-tuning process.
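The role of singular values can be seen on a small example. The sketch below builds a matrix that is close to rank 2 (an assumption chosen purely for illustration), decomposes it with NumPy, and rebuilds it from only its two largest singular values.

```python
import numpy as np

rng = np.random.default_rng(0)
# Build a matrix that is approximately rank 2, plus a little noise.
matrix = rng.normal(size=(6, 2)) @ rng.normal(size=(2, 5))
matrix += 0.01 * rng.normal(size=matrix.shape)

# u and vt hold singular vectors; s holds singular values, largest first.
u, s, vt = np.linalg.svd(matrix, full_matrices=False)

rank = 2  # keep only the two largest singular values
approx = u[:, :rank] @ np.diag(s[:rank]) @ vt[:rank, :]

relative_error = np.linalg.norm(matrix - approx) / np.linalg.norm(matrix)
print(s.round(3))
print(relative_error)
```

The first two singular values dominate the rest, so discarding the small ones loses very little of the original matrix.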

LoRA intervenes in this process, freezing all original model parameters and introducing a pair of “rank decomposition matrices” alongside the original weights. These smaller matrices, denoted as A and B, undergo training through supervised learning, a process described in earlier chapters.

The pivotal element in this strategy is the parameter called rank (‘r’), which dictates the size of the low-rank matrices. A careful selection of ‘r’ can yield impressive results, even with a smaller value, thereby creating a low-rank matrix with fewer parameters to train. This strategy has been effectively implemented using open-source libraries such as HuggingFace Transformers, facilitating LoRA fine-tuning for various tasks with remarkable efficiency.
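The effect of the rank on the parameter count can be sketched with plain NumPy. The layer size, the rank of 8, the initialization scheme, and the function name `lora_forward` are assumptions made for illustration; this is not the API of any particular library, and the scaling factor usually applied to the update is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r = 512, 512, 8  # hypothetical layer size and rank

w_frozen = rng.normal(size=(d_out, d_in))        # pre-trained weight, never updated
lora_a = rng.normal(scale=0.01, size=(r, d_in))  # trainable, small random init
lora_b = np.zeros((d_out, r))                    # trainable, zero init

def lora_forward(x):
    # Frozen path plus the low-rank update B @ A applied to the same input.
    return x @ w_frozen.T + (x @ lora_a.T) @ lora_b.T

x = rng.normal(size=(2, d_in))
full_params = w_frozen.size
lora_params = lora_a.size + lora_b.size
print(full_params, lora_params)  # 262144 8192
```

Because B starts at zero, the adapted layer initially behaves exactly like the frozen one, and only the 8,192 values in A and B would receive gradient updates.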

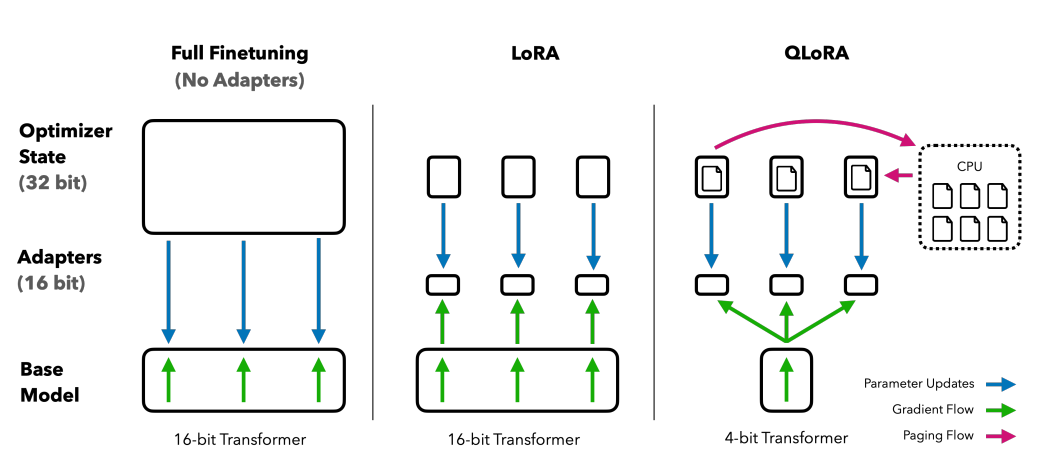

2) QLoRA: Taking LoRA Efficiency Higher

Building on the foundation laid by LoRA, QLoRA further minimizes memory requirements. Introduced by Tim Dettmers and others in 2023, it combines low-rank adaptation with quantization, using a 4-bit quantization format termed NormalFloat, or NF4. Quantization is essentially a process that moves data from a higher-information representation to one with less information. This approach maintains the efficacy of 16-bit fine-tuning methods, dequantizing the 4-bit weights to 16 bits as needed during computation.

Comparing fine-tuning methods: QLoRA enhances LoRA with 4-bit precision quantization and paged optimizers for memory spike management

QLoRA leverages 4-bit NormalFloat (NF4), targeting every layer in the transformer architecture, and introduces the concept of double quantization to further shrink the memory footprint required for fine-tuning. Double quantization quantizes the quantization constants themselves, while paged optimizers, built on unified memory management, avert the memory spikes that typically occur with gradient checkpointing.
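The general mechanics of quantizing to 4 bits and dequantizing for computation can be sketched as follows. Note the assumptions: this uses simple evenly spaced integer levels with one scale per block, not the actual NF4 codebook, and the block size of 64 and the function names are hypothetical. The per-block `scales` play the role of the quantization constants that double quantization would compress further.

```python
import numpy as np

def quantize_blockwise(weights, block_size=64, levels=7):
    # Split into blocks; each block stores one float scale (its quantization constant).
    blocks = weights.reshape(-1, block_size)
    scales = np.abs(blocks).max(axis=1, keepdims=True)
    scales[scales == 0] = 1.0  # avoid dividing by zero for all-zero blocks
    # Map each value to an integer in [-levels, levels], which fits in 4 bits.
    codes = np.round(blocks / scales * levels).astype(np.int8)
    return codes, scales

def dequantize_blockwise(codes, scales, levels=7):
    # Recover approximate values when they are needed for computation.
    return codes.astype(np.float32) / levels * scales

rng = np.random.default_rng(0)
weights = rng.normal(size=4096).astype(np.float32)
codes, scales = quantize_blockwise(weights)
restored = dequantize_blockwise(codes, scales).reshape(-1)

print(codes.min(), codes.max())          # stays within [-7, 7]
print(np.abs(weights - restored).max())  # worst-case rounding error
```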

Guanaco, a QLoRA-tuned family of models, sets a benchmark in open-source chatbot solutions. Its performance, validated through systematic human and automated assessments, underscores its strength and efficiency in the field.

The 65B and 33B versions of Guanaco, fine-tuned using a modified version of the OASST1 dataset, emerge as formidable contenders to renowned models like ChatGPT and even GPT-4.

Fine-tuning utilizing Reinforcement Learning from Human Feedback

Reinforcement Learning from Human Feedback (RLHF) comes into play when fine-tuning pre-trained language models to align more closely with human values. This concept was introduced by OpenAI in 2017, laying the foundation for enhanced document summarization and the development of InstructGPT.

At the core of RLHF is the reinforcement learning paradigm, a type of machine learning technique where an agent learns how to behave in an environment by performing actions and receiving rewards. It is a continuous loop of action and feedback, where the agent is incentivized to make choices that will yield the highest reward.

Translating this to the realm of language models, the agent is the model itself, operating within the environment of a given context window and making decisions based on the state, which is defined by the current tokens in the context window. The “action space” encompasses all potential tokens the model can choose from, with the goal being to select the token that aligns most closely with human preferences.

The RLHF process leverages human feedback extensively, using it to train a reward model. This model plays a crucial role in guiding the pre-trained model during the fine-tuning process, encouraging it to generate outputs that are more aligned with human values. It is a dynamic and iterative process, where the model learns through a series of “rollouts,” a term used to describe the sequence of states and actions leading to a reward in the context of language generation.
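The vocabulary of state, action, rollout, and reward can be illustrated with a toy loop. Everything here is a stand-in: `toy_policy` picks tokens at random instead of being a language model, `toy_reward_model` is a hand-written scoring rule instead of a model trained on human feedback, and the tiny vocabulary is hypothetical. No policy update is performed; the sketch only shows what a single rollout looks like.

```python
import random

VOCAB = ["thanks", "sorry", "great", "bad", "<eos>"]  # hypothetical action space

def toy_policy(state, rng):
    # Stand-in for the language model: picks the next token uniformly at random.
    return rng.choice(VOCAB)

def toy_reward_model(tokens):
    # Stand-in for a learned reward model: scores a finished sequence.
    preferred = {"thanks", "great"}
    liked = sum(1 for t in tokens if t in preferred)
    disliked = sum(1 for t in tokens if t == "bad")
    return float(liked - disliked)

def rollout(prompt, rng, max_new_tokens=5):
    state = list(prompt)  # state = tokens currently in the context window
    actions = []
    for _ in range(max_new_tokens):
        action = toy_policy(state, rng)  # action = choice of next token
        if action == "<eos>":
            break
        actions.append(action)
        state.append(action)
    return actions, toy_reward_model(actions)

rng = random.Random(0)
actions, reward = rollout(["hello"], rng)
print(actions, reward)
```

In a real RLHF setup, the reward from many such rollouts would be used to update the model so that higher-scoring sequences become more likely.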

One of the remarkable potentials of RLHF is its ability to foster personalization in AI assistants, tailoring them to resonate with individual users’ preferences, be it their sense of humor or daily routines. It opens up avenues for creating AI systems that are not just technically proficient but also emotionally intelligent, capable of understanding and responding to nuances in human communication.

However, it is essential to note that RLHF is not a foolproof solution. The models are still susceptible to producing undesirable outputs, a reflection of the vast and often unregulated and biased data they are trained on.

Conclusion

The fine-tuning process, a critical step in leveraging the full potential of LLMs such as Alpaca, Falcon, and GPT-4, has become more refined and focused, offering tailored solutions to a wide array of tasks.

We have seen single-task fine-tuning, which specializes models in particular roles, and Parameter-Efficient Fine-Tuning (PEFT) methods including LoRA and QLoRA, which aim to make the training process more efficient and cost-effective. These developments are opening doors to high-level AI functionalities for a broader audience.

Furthermore, the introduction of Reinforcement Learning from Human Feedback (RLHF) by OpenAI is a step towards creating AI systems that understand and align more closely with human values and preferences, setting the stage for AI assistants that are not only smart but also sensitive to individual users’ needs. Both RLHF and PEFT work in synergy to enhance the functionality and efficiency of Large Language Models.

As businesses, enterprises, and individuals look to integrate these fine-tuned LLMs into their operations, they are essentially welcoming a future where AI is more than a tool; it is a partner that understands and adapts to human contexts, offering solutions that are innovative and personalized.