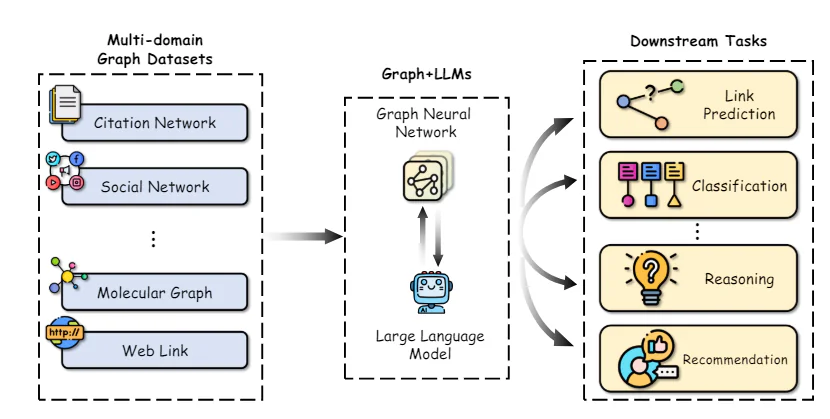

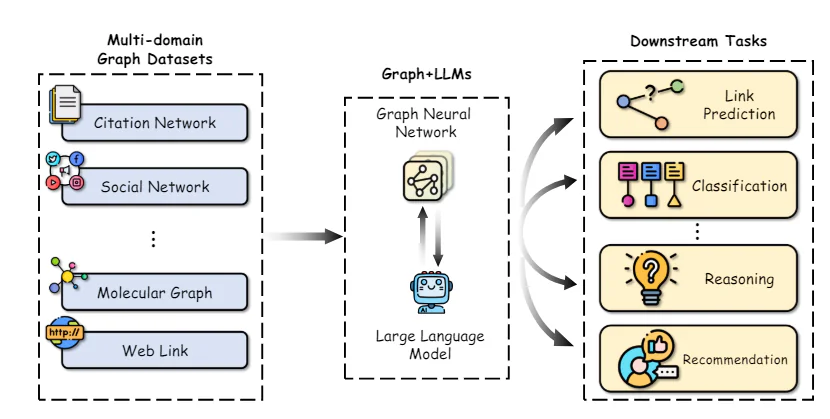

Graphs are data structures that represent complex relationships across a wide range of domains, including social networks, knowledge bases, biological systems, and many more. In these graphs, entities are represented as nodes, and their relationships are depicted as edges.

The ability to effectively represent and reason about these intricate relational structures is crucial for enabling advances in fields like network science, cheminformatics, and recommender systems.

Graph Neural Networks (GNNs) have emerged as a powerful deep learning framework for graph machine learning tasks. By incorporating the graph topology into the neural network architecture through neighborhood aggregation or graph convolutions, GNNs can learn low-dimensional vector representations that encode both the node features and their structural roles. This allows GNNs to achieve state-of-the-art performance on tasks such as node classification, link prediction, and graph classification across diverse application areas.

While GNNs have driven substantial progress, some key challenges remain. Obtaining high-quality labeled data for training supervised GNN models can be expensive and time-consuming. Additionally, GNNs can struggle with heterogeneous graph structures and with situations where the graph distribution at test time differs significantly from the training data (out-of-distribution generalization).

In parallel, Large Language Models (LLMs) like GPT-4 and LLaMA have taken the world by storm with their impressive natural language understanding and generation capabilities. Trained on massive text corpora with billions of parameters, LLMs exhibit remarkable few-shot learning abilities, generalization across tasks, and commonsense reasoning skills that were once thought to be extremely challenging for AI systems.

The tremendous success of LLMs has catalyzed explorations into leveraging their power for graph machine learning tasks. On one hand, the knowledge and reasoning capabilities of LLMs present opportunities to enhance traditional GNN models. Conversely, the structured representations and factual knowledge inherent in graphs could be instrumental in addressing some key limitations of LLMs, such as hallucinations and lack of interpretability.

In this article, we will delve into the latest research at the intersection of graph machine learning and large language models. We will explore how LLMs can be used to enhance various aspects of graph ML, review approaches for incorporating graph knowledge into LLMs, and discuss emerging applications and future directions for this exciting field.

Graph Neural Networks and Self-Supervised Learning

To provide the necessary context, we will first briefly review the core concepts and methods in graph neural networks and self-supervised graph representation learning.

Graph Neural Network Architectures

The key distinction between traditional deep neural networks and GNNs lies in their ability to operate directly on graph-structured data. GNNs follow a neighborhood aggregation scheme, where each node aggregates feature vectors from its neighbors to compute its own representation.
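The neighborhood aggregation scheme described above can be illustrated with a minimal pure-Python sketch. It assumes a mean aggregator and a fixed averaging update; real GNN layers use learned weight matrices and nonlinearities, which are left out here. The function name `mean_aggregate_layer` and the toy graph are illustrative assumptions, not part of any library.

```python
from typing import Dict, List


def mean_aggregate_layer(
    features: Dict[int, List[float]],
    neighbors: Dict[int, List[int]],
) -> Dict[int, List[float]]:
    """One round of neighborhood aggregation with a mean aggregator.

    message: each neighbor sends its current feature vector unchanged.
    update:  the node averages its own vector with the mean of its messages.
    (No learned parameters; this only shows the data flow.)
    """
    updated: Dict[int, List[float]] = {}
    for node, own in features.items():
        msgs = [features[n] for n in neighbors.get(node, [])]
        if msgs:
            dim = len(own)
            mean_msg = [sum(m[i] for m in msgs) / len(msgs) for i in range(dim)]
        else:
            mean_msg = own  # isolated node: nothing to aggregate
        updated[node] = [(o + m) / 2.0 for o, m in zip(own, mean_msg)]
    return updated


# Toy path graph 0 - 1 - 2 with 2-dimensional node features.
features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
neighbors = {0: [1], 1: [0, 2], 2: [1]}
hidden = mean_aggregate_layer(features, neighbors)
print(hidden)  # {0: [0.5, 0.5], 1: [0.5, 0.75], 2: [0.5, 1.0]}
```

Stacking several such rounds lets information travel further: after k rounds, a node's representation depends on nodes up to k hops away.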

Numerous GNN architectures have been proposed with different instantiations of the message and update functions, such as Graph Convolutional Networks (GCNs), GraphSAGE, Graph Attention Networks (GATs), and Graph Isomorphism Networks (GINs), among others.

More recently, graph transformers have gained popularity by adapting the self-attention mechanism from natural language transformers to operate on graph-structured data. Some examples include Graphormer and GraphFormers. These models are able to capture long-range dependencies across the graph better than purely neighborhood-based GNNs.

Self-Supervised Learning on Graphs

While GNNs are powerful representational models, their performance is often bottlenecked by the lack of large labeled datasets required for supervised training. Self-supervised learning has emerged as a promising paradigm to pre-train GNNs on unlabeled graph data by leveraging pretext tasks that require only the intrinsic graph structure and node features.

Some common pretext tasks used for self-supervised GNN pre-training include:

- Node Property Prediction: Randomly masking or corrupting a portion of the node attributes/features and tasking the GNN with reconstructing them.

- Edge/Link Prediction: Learning to predict whether an edge exists between a pair of nodes, often based on random edge masking.

- Contrastive Learning: Maximizing similarities between views of the same graph sample while pushing apart views from different graphs.

- Mutual Information Maximization: Maximizing the mutual information between local node representations and a target representation such as the global graph embedding.
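To make the first two pretext tasks in the list above concrete, here is a minimal sketch of how their training signals might be prepared. It assumes zero vectors as the mask value (actual methods may use a learned mask token or noise instead), and the helper names `mask_node_features` and `split_edges` are hypothetical. The GNN and its loss are omitted.

```python
import random
from typing import List, Tuple


def mask_node_features(
    features: List[List[float]], mask_rate: float, rng: random.Random
) -> Tuple[List[List[float]], List[int]]:
    """Zero out the feature vectors of a random subset of nodes.

    Returns the corrupted features and the masked node indices; the original
    vectors of those nodes are the reconstruction targets.
    """
    num_nodes = len(features)
    num_masked = max(1, int(mask_rate * num_nodes))
    masked = sorted(rng.sample(range(num_nodes), num_masked))
    masked_set = set(masked)
    corrupted = [
        [0.0] * len(f) if i in masked_set else list(f)
        for i, f in enumerate(features)
    ]
    return corrupted, masked


def split_edges(
    edges: List[Tuple[int, int]], holdout_rate: float, rng: random.Random
) -> Tuple[List[Tuple[int, int]], List[Tuple[int, int]]]:
    """Hide a random subset of edges.

    The model sees the visible edges and is trained to predict the hidden ones.
    """
    shuffled = list(edges)
    rng.shuffle(shuffled)
    num_hidden = max(1, int(holdout_rate * len(shuffled)))
    return shuffled[num_hidden:], shuffled[:num_hidden]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
features = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
edges = [(0, 1), (1, 2), (2, 3), (0, 3)]

corrupted, masked_nodes = mask_node_features(features, 0.25, rng)
visible_edges, hidden_edges = split_edges(edges, 0.25, rng)
```

In both cases the supervision comes from the graph itself: the hidden features and hidden edges act as labels, so no manual annotation is needed.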

Pretext tasks like these allow the GNN to extract meaningful structural and semantic patterns from the unlabeled graph data during pre-training. The pre-trained GNN can then be fine-tuned on relatively small labeled subsets to excel at various downstream tasks like node classification, link prediction, and graph classification.
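As one concrete instance of such a pretext objective, the contrastive task listed above is often implemented with an InfoNCE-style loss. The sketch below is an assumption about one common formulation, not a specific method from the literature discussed here: row i of each input holds the embedding of one view of graph i, the matching row in the other view is the positive, and the remaining rows serve as negatives. The temperature value is arbitrary.

```python
import math
from typing import List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; assumes both vectors are non-zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def info_nce_loss(
    view_a: List[List[float]], view_b: List[List[float]], temperature: float = 0.5
) -> float:
    """Average InfoNCE loss over a batch of graph embeddings.

    For anchor view_a[i], view_b[i] is the positive and every other row of
    view_b is a negative.
    """
    total = 0.0
    for i, anchor in enumerate(view_a):
        logits = [cosine(anchor, other) / temperature for other in view_b]
        log_denominator = math.log(sum(math.exp(l) for l in logits))
        total += log_denominator - logits[i]  # -log softmax of the positive
    return total / len(view_a)


# Two graphs, two views each. Matching views are identical in the first call
# and swapped in the second, so the second loss should be larger.
aligned = info_nce_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = info_nce_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
```

Minimizing this loss pulls embeddings of views of the same graph together and pushes embeddings of different graphs apart, which matches the verbal description of contrastive learning given earlier.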

By leveraging self-supervision, GNNs pre-trained on large unlabeled datasets exhibit better generalization, robustness to distribution shifts, and efficiency compared to training from scratch. However, some key limitations of traditional GNN-based self-supervised methods remain. Next, we explore how LLMs can help address them.

Enhancing Graph ML with Large Language Models

The remarkable capabilities of LLMs in understanding natural language, reasoning, and few-shot learning present opportunities to enhance multiple aspects of graph machine learning pipelines. We explore some key research directions in this space:

A key challenge in applying GNNs is obtaining high-quality feature representations for nodes and edges, especially when they contain rich textual attributes like descriptions, titles, or abstracts. Traditionally, simple bag-of-words or pre-trained word embedding models have been used, which often fail to capture the nuanced semantics.

Recent works have demonstrated the power of leveraging large language models as text encoders to construct better node/edge feature representations before passing them to the GNN. For example, Chen et al. utilize LLMs like GPT-3 to encode textual node attributes, showing significant performance gains over traditional word embeddings on node classification tasks.
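The overall data flow of using a text encoder to build node features can be sketched as follows. This is only an illustration of the pipeline shape: `embed_text` is a hypothetical placeholder that hashes the text into a deterministic vector so the example runs without any model, and it carries no semantic meaning. In practice it would be replaced by a call to an actual LLM or sentence encoder.

```python
import hashlib
from typing import Dict, List


def embed_text(text: str, dim: int = 8) -> List[float]:
    """HYPOTHETICAL stand-in for an LLM text encoder.

    Hashes the text into a deterministic pseudo-embedding in [0, 1]^dim.
    Swap this for a real encoder; the hash has no semantic content.
    Supports dim up to 32 (the SHA-256 digest length in bytes).
    """
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [digest[i] / 255.0 for i in range(dim)]


def build_node_features(node_text: Dict[int, str], dim: int = 8) -> Dict[int, List[float]]:
    """Encode each node's text attribute into a fixed-size feature vector."""
    return {node: embed_text(text, dim) for node, text in node_text.items()}


# Toy text-attributed graph: each node carries a title-like string.
node_text = {
    0: "Graph attention networks for citation analysis",
    1: "A survey of recommender systems",
}
node_features = build_node_features(node_text)
# node_features can now be passed to a GNN as its input feature matrix.
```

The design point is the separation of concerns: the encoder turns text into vectors once, and the GNN then propagates those vectors over the graph structure.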

Beyond better text encoders, LLMs can be used to generate augmented information from the original text attributes in a semi-supervised manner. TAPE generates potential labels/explanations for nodes using an LLM and uses these as additional augmented features. KEA extracts terms from text attributes using an LLM and obtains detailed descriptions for these terms to augment features.
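The general idea of LLM-generated augmentation can be sketched as below. This is not the actual TAPE or KEA pipeline; it is a simplified illustration under stated assumptions. The prompt wording, the `[LLM output]` separator, and the `fake_llm` function (which returns a canned string in place of a real model call) are all hypothetical.

```python
from typing import Callable, Dict, List


def build_prompt(text: str, labels: List[str]) -> str:
    """Ask for a predicted category plus a short explanation (wording is illustrative)."""
    return (
        f"Text: {text}\n"
        f"Which category fits best: {', '.join(labels)}?\n"
        "Answer with a prediction and a brief explanation."
    )


def fake_llm(prompt: str) -> str:
    """HYPOTHETICAL stand-in for a real LLM call; always returns a canned answer."""
    return "Prediction: graph learning. Explanation: the text discusses node embeddings."


def augment_node_text(
    node_text: Dict[int, str], labels: List[str], llm: Callable[[str], str]
) -> Dict[int, str]:
    """Append the LLM's prediction/explanation to each node's original text."""
    augmented: Dict[int, str] = {}
    for node, text in node_text.items():
        response = llm(build_prompt(text, labels))
        augmented[node] = f"{text}\n[LLM output] {response}"
    return augmented


node_text = {0: "Learning node embeddings with random walks"}
labels = ["databases", "graph learning"]
augmented = augment_node_text(node_text, labels, fake_llm)
# The augmented text would then be encoded into features for the GNN.
```

Passing the LLM as a callable keeps the sketch testable and makes it easy to substitute a real client later.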

By enhancing the quality and expressiveness of input features, LLMs can impart their superior natural language understanding capabilities to GNNs, boosting performance on downstream tasks.