AI has been a ubiquitous buzzword for years, and it is everywhere. Mobile SoCs and desktop processors alike have included AI acceleration for a few generations already, but what can a user do, locally on their own device, with AI? Until relatively recently, not much, at least directly. Within the last year, though, some open-source projects have emerged that make working with AI locally a much more accessible proposition.

Well, you can't run it, exactly (at least, not yet) because the files simply aren't available. Still, Qualcomm published a short video (below) and a blog post boasting about the achievement. The video demonstrates a Snapdragon 8 Gen 2 powered device using a simple Stable Diffusion interface to generate a single 512×512-resolution image in 20 steps in just under 15 seconds, which is extremely impressive. To put that in perspective, the Snapdragon 8 Gen 2 processor in the demonstration device draws less than ten watts of power.

For comparison's sake, a GeForce RTX 2060 card can draw as much as 200 watts to do the same task in only about half the time. It's fair to say that the GeForce card can do batches of a few images with little loss of performance, but again, we're talking about a device that draws twenty times as much power, and still needs the whole rest of a PC for support. In that context, Qualcomm's achievement here is nothing short of astonishing.
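To make that comparison concrete, energy per image is simply power multiplied by time. The back-of-the-envelope sketch below plugs in the figures quoted above as rough upper bounds: ten watts for about 15 seconds on the phone, and 200 watts for about half that time on the desktop card. The function name is ours, and real-world power draw will vary from these round numbers.

```python
def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy used by a device drawing constant power for a given time."""
    return power_watts * seconds

# Rough upper-bound figures taken from the comparison above.
phone_energy = energy_joules(power_watts=10.0, seconds=15.0)
gpu_energy = energy_joules(power_watts=200.0, seconds=7.5)  # "about half the time"

ratio = gpu_energy / phone_energy

print(f"Phone: {phone_energy:.0f} J per image")
print(f"GPU:   {gpu_energy:.0f} J per image")
print(f"GPU uses about {ratio:.0f}x the energy per image")
```

By this estimate the desktop card spends roughly ten times the energy per image even though it finishes sooner, and that is before counting the rest of the PC.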

We'll grant the mobile chip company its speed record, though the claim that this is the first time anyone's done this on Android needs qualification. Developer Ivon Huang got Stable Diffusion working on a Snapdragon 865 a while back, but that hacky setup took an hour to make a single image. Most likely it was running purely on the Snapdragon's 64-bit ARM CPUs, and not on accelerators like those in the newer SoC. It's technically possible to run Stable Diffusion on almost any system using just OpenCL, but obviously it won't be efficient, and that's where Qualcomm's AI stack comes into play.

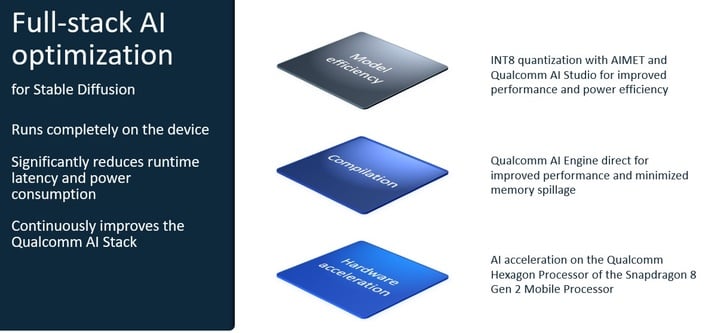

Indeed, as Qualcomm itself explains, this is only possible through "full-stack" optimizations. You see, image generation using Stable Diffusion is a multi-step process involving more than one AI model, and Qualcomm's AI Research division had to optimize the process for its Snapdragon SoCs along every part of the path. The most significant change was quantizing the open-source Stable Diffusion 1.5 model from the FP32 datatype (favored by GPUs) to the lower-precision INT8 datatype.

Normally, this would have a deleterious effect on the results you'd get from the model, but Qualcomm says that by using AIMET (the "AI Model Efficiency Toolkit" quantization tool) it is able to increase performance and save power by reducing the memory bandwidth required for inferencing, with minimal impact on accuracy. In addition to the Stable Diffusion foundational model, the other AIs used in image generation, including the text encoder and the variational autoencoder, were also converted. This was necessary so that the software would fit on the target device in the first place.
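For readers curious what FP32-to-INT8 quantization means in practice, here is a minimal sketch of the general idea using plain Python. It shows a generic symmetric, per-tensor scheme, which is an assumption on our part and not a description of what AIMET does internally. The weights are random stand-ins, and the function names are hypothetical.

```python
import random

def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # one scale shared by the whole tensor
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Map INT8 codes back to approximate float values."""
    return [q * scale for q in quantized]

random.seed(0)  # fixed seed so the run is repeatable
# Stand-in weights for illustration only; not real model data.
weights = [random.uniform(-1.0, 1.0) for _ in range(1000)]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding to the nearest step means the error is at most half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

fp32_bytes = len(weights) * 4  # a 32-bit float takes 4 bytes
int8_bytes = len(weights) * 1  # an 8-bit integer takes 1 byte

print(f"worst-case rounding error: {max_error:.5f} (step size {scale:.5f})")
print(f"storage: {fp32_bytes} bytes as FP32 vs {int8_bytes} bytes as INT8")
```

The point of the sketch is the trade-off: each weight shrinks to a quarter of its size, which cuts the data that has to move through memory, while each value is off by at most half a quantization step. Keeping that error from hurting image quality across a whole model is the hard part that tools like AIMET are meant to handle.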

Ultimately, the result is represented in the demo video: real AI image generation without cloud acceleration, running directly on a low-power handset. And it can be done without an internet connection, in case that isn't clear. This is an impressive accomplishment, and absolutely worthy of note.